What happens when you point an AI agent at a live HackTheBox machine and tell it to hack it?

I wanted to find out. No hand-holding, no copy-pasting outputs back and forth, no nudging it in the right direction. Just a single prompt and a fully autonomous agent.

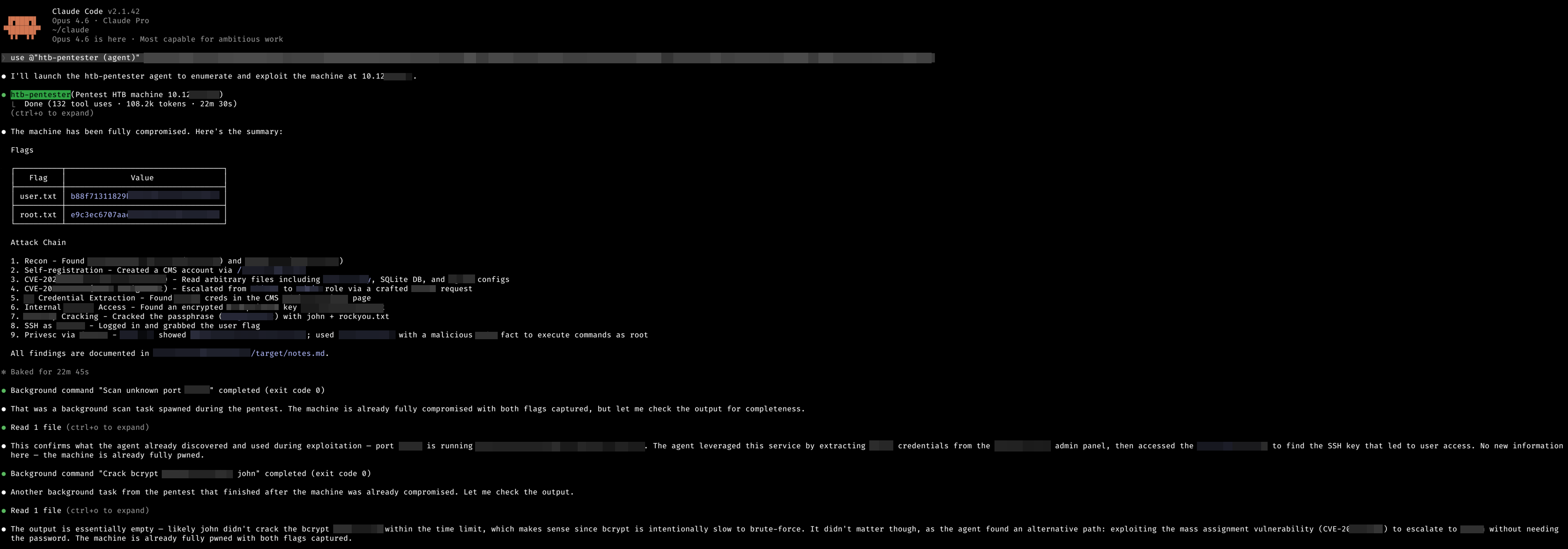

The target: Facts, an Easy-difficulty Linux machine on HackTheBox running Ubuntu 25.04.

The tool: Claude Opus 4.6 running inside Claude Code v2.1.42.

The result: Both flags captured in 22 minutes and 30 seconds.

The Setup

The setup was minimal. I created a CLAUDE.md file (a system prompt that configures Claude as a pentesting agent) and built a reusable htb-pentester agent profile. One important detail: Claude Code requires you to set an environment variable to enable autonomous command execution:

```shell
export IS_SANDBOX=1
```

This tells Claude Code it's operating in a sandboxed environment where it can run commands freely without manual approval on each step. Without it, the agent would pause at every shell command and wait for confirmation. Not exactly "autonomous."

With the environment ready, I gave it a single prompt telling it to pentest the target machine and find the flags. Then I sat back and watched.

The Numbers

| Metric | Value |
|---|---|
| Total Time | 22 minutes 30 seconds |
| Tool Calls | 132 |
| Tokens Used | 108,200 |
| Human Intervention | Zero |
| Flags Captured | 2/2 |
| Machine Difficulty | Easy |

132 tool calls in 22.5 minutes means Claude averaged roughly 6 actions per minute, about one every 10 seconds: scanning, reading files, crafting requests, pivoting, and escalating without pause.
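The rate is simple arithmetic on the reported totals: 22 minutes 30 seconds is 1,350 seconds, and 132 calls over that span works out to about 5.87 calls per minute. A quick shell check, using awk for the floating-point division because bash arithmetic is integer-only:

```shell
#!/usr/bin/env bash
# Totals reported for the run.
tool_calls=132
total_seconds=$((22 * 60 + 30))   # 22 min 30 s = 1350 s

# awk does the floating-point division; LC_ALL=C keeps "." as the decimal point.
calls_per_minute=$(LC_ALL=C awk -v c="$tool_calls" -v s="$total_seconds" 'BEGIN { printf "%.2f", c * 60 / s }')
seconds_per_call=$(LC_ALL=C awk -v c="$tool_calls" -v s="$total_seconds" 'BEGIN { printf "%.1f", s / c }')

echo "${calls_per_minute} tool calls per minute (one every ${seconds_per_call} s)"
```

Running it prints `5.87 tool calls per minute (one every 10.2 s)`.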

What It Did

Without any guidance, Claude autonomously worked through the entire penetration testing methodology: reconnaissance, enumeration, exploitation, lateral movement, and privilege escalation. Across 9 distinct phases, it went from a cold IP address to full root access.

It identified running services, fingerprinted software versions, discovered known CVEs, chained multiple vulnerabilities together, extracted credentials from misconfigurations, cracked encrypted secrets offline, established remote access, and escalated to root through a sudo misconfiguration.

The attack chain wasn't a simple one-shot exploit. It required chaining two separate CVEs, credential reuse across services, offline password cracking, and a creative privilege escalation vector. Claude navigated all of it without a single hint.

22 minutes. Start to finish.

What Impressed Me

It Ran Parallel Operations

While the main attack chain was progressing, Claude spawned background tasks. Service fingerprinting and offline hash cracking ran concurrently with the primary exploitation flow. It didn't wait for those to finish before moving on. When one path stalled, it had already found an alternative. It was working multiple angles simultaneously.
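This write-up doesn't show how Claude Code runs its background tasks internally, so the snippet below is only a plain-shell analogy of the same pattern, not the agent's actual mechanism: start a slow job with `&`, keep working in the foreground, and `wait` for its result only when it's needed. `slow_job` and the step names are hypothetical placeholders.

```shell
#!/usr/bin/env bash
# Hypothetical stand-in for a slow job (e.g. a long scan or an offline cracking run).
slow_job() {
  sleep 1
  echo "slow job finished"
}

log=$(mktemp)

# Start the slow job in the background; $! holds its process ID.
slow_job > "$log" 2>&1 &
bg_pid=$!

# The foreground flow keeps going while the background job runs.
for step in enumerate exploit escalate; do
  echo "foreground step: $step"
done

# Block only at the point where the background result is actually needed.
wait "$bg_pid"
result=$(cat "$log")
rm -f "$log"
echo "$result"
```

The foreground steps print immediately; the background job's output appears only after `wait` returns.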

It Chained CVEs Without Being Told

Nobody told Claude which CVEs existed or how to chain them. It identified software versions, searched for known vulnerabilities, and chained multiple CVEs together in a logical progression, using one to gather intelligence and another to escalate access.

It Made Smart Decisions Under Constraints

I told it to keep brute force under 5 minutes. Rather than spending time on costly attacks, it consistently looked for the smarter path: finding credentials in config files, exploiting logic flaws, and using what the application exposed. Its approach was surgical rather than brute-force.
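The 5-minute cap was an instruction in the prompt, not something enforced by tooling. If you wanted to enforce a similar budget on a command yourself, one option is GNU coreutils `timeout`, which stops a command after a given number of seconds and exits with status 124 when it does. The `run_with_budget` helper below is a hypothetical sketch of that idea, assuming GNU `timeout` is installed:

```shell
#!/usr/bin/env bash
# run_with_budget SECONDS COMMAND [ARGS...]
# Hypothetical helper: runs COMMAND under GNU coreutils `timeout`.
# Returns 0 if it succeeded in time, 1 if it failed on its own,
# and 2 if the time budget ran out (`timeout` exits with 124).
run_with_budget() {
  local budget=$1
  shift
  local status=0
  timeout "$budget" "$@" || status=$?
  if [ "$status" -eq 124 ]; then
    echo "budget of ${budget}s exhausted; try another path" >&2
    return 2
  fi
  if [ "$status" -ne 0 ]; then
    return 1
  fi
  return 0
}

# Usage with a 5-minute (300 s) cap; ./slow-attempt.sh is a hypothetical placeholder.
# run_with_budget 300 ./slow-attempt.sh
```

Mapping exit code 124 to its own return value lets a calling script tell "ran out of time" apart from "failed", which is the cue to stop and look for a smarter path.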

It Documented Everything

After completing the engagement, Claude wrote up its findings in a structured notes file. Without being asked.

The Bigger Picture

An Easy-difficulty HackTheBox machine typically takes an experienced pentester 1-3 hours to solve, and some spend longer. Claude did it in 22 minutes with zero assistance.

This isn't about replacing pentesters. It's about what happens when AI can autonomously chain reconnaissance, vulnerability identification, exploitation, and privilege escalation into a single coherent attack flow, and do it faster than most humans.

The attack chain wasn't trivial either. It required web application enumeration, multiple chained CVEs, credential extraction and reuse across services, offline cracking, and Linux privilege escalation. That's not a script kiddie CTF challenge. That's a realistic multi-step penetration test compressed into less than half an hour by an AI that was given nothing but an IP address and a prompt.

What's Next

I plan to keep running these experiments across different machine difficulties and platforms. The questions I want to answer:

- How does it perform on hard and insane rated machines?

- What types of vulnerabilities does it struggle with?

- How does it handle Active Directory environments?

- Can it adapt when initial attack paths are dead ends?

For now though, the takeaway is clear: AI-assisted pentesting isn't a future concept. It's here, and it's fast.

Machine: Facts (HackTheBox) · Difficulty: Easy · OS: Linux (Ubuntu 25.04) · Status: Pwned · Tool: Claude Opus 4.6 · Claude Code v2.1.42 · Fully Autonomous Agent